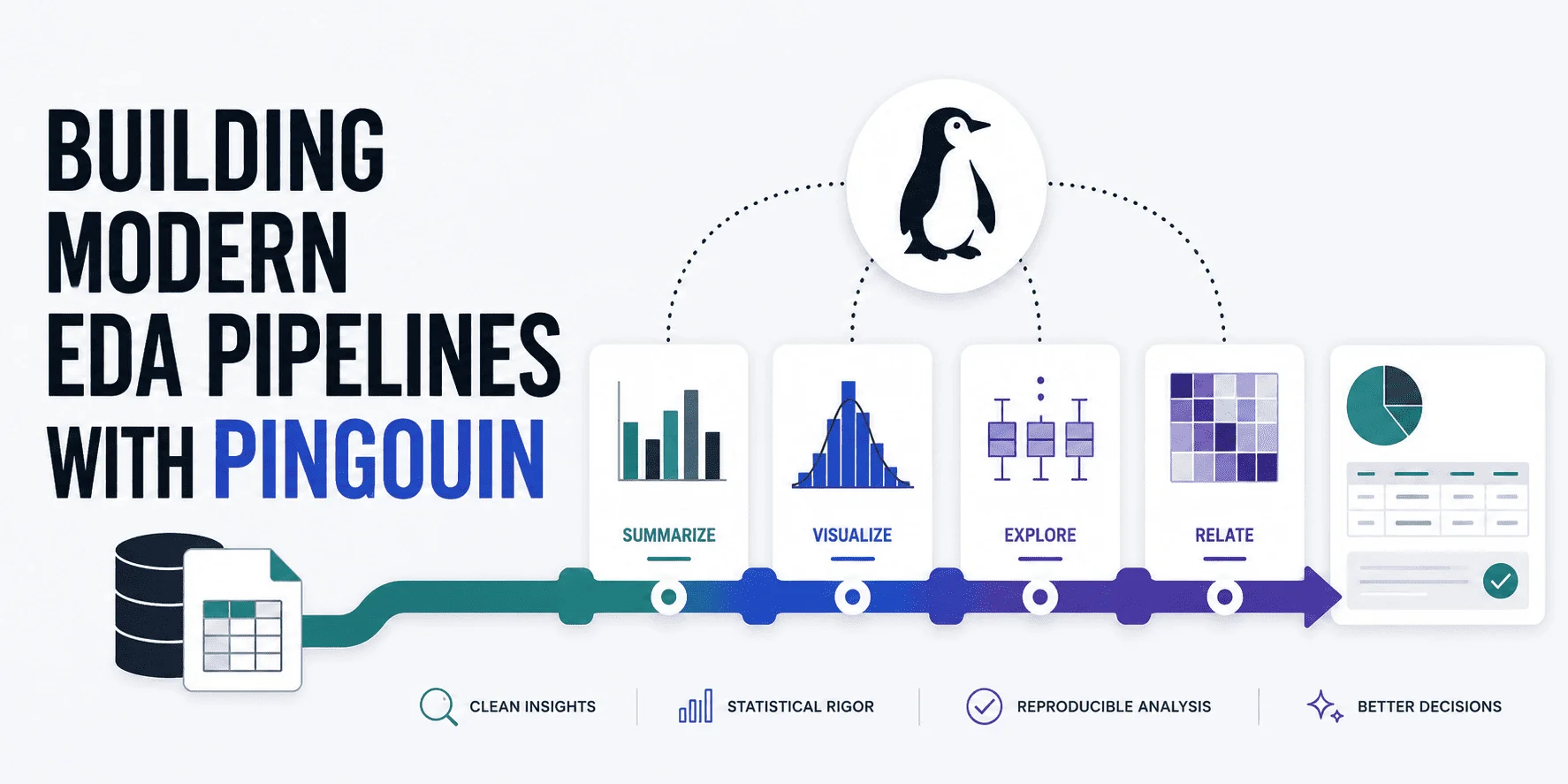

Creating Efficient EDA Pipelines with Pingouin

# The Imperative for Rigorous Exploratory Data Analysis in Machine Learning

Data science rests on a foundational truth: a machine learning model can perform only as well as the data it is trained on allows. The maxim "garbage in, garbage out" (GIGO) puts it bluntly. As businesses increasingly rely on data-driven decision-making, verifying the integrity and suitability of raw data before downstream analysis becomes essential. This is where statistically oriented exploratory data analysis (EDA) tools such as Pingouin help: they let data scientists build automated, robust EDA pipelines that validate the essential properties of their datasets.

# Pingouin: A Versatile Companion for EDA

Pingouin is an open-source Python library for statistical analysis that fits naturally into exploratory data analysis. It builds on established libraries such as SciPy and Pandas, and offers a broad set of statistical tests and assumption checks, many of which return their results as Pandas DataFrames. For professionals looking to tighten their data preprocessing, Pingouin supports an efficient workflow, from verifying univariate and multivariate normality to checking homoscedasticity and sphericity.

# Key Statistical Properties Explored

The efficacy of downstream machine learning processes hinges not just on the presence of data, but on its statistical characteristics. For example, univariate normality is a key assumption of many classical parametric procedures, such as t-tests and ANOVA. Pingouin's pg.normality() function runs a Shapiro-Wilk test by default and reports, for each continuous variable, a test statistic, a p-value, and a flag indicating whether the data are consistent with a normal distribution at the chosen significance level. The checks don’t end there: multivariate normality matters for multivariate techniques like MANOVA. The pg.multivariate_normality() function applies the Henze-Zirkler test to assess whether the joint distribution of several features is consistent with a multivariate normal distribution, which is worth confirming before relying on such methods.

In a dataset on wine quality, for instance, common attributes such as acidity and alcohol content were found not to satisfy normality in either the univariate or the multivariate assessment. This suggests that transformations, such as a log transformation for right-skewed positive features, may be needed before using methods that presume normally distributed inputs.

# Addressing Homoscedasticity and Sphericity

Two additional statistical properties worth assessing are homoscedasticity and sphericity. Homoscedasticity is the assumption of equal variances across groups (or, in regression terms, constant residual variance), and it underpins valid inference in linear regression and ANOVA. Pingouin's pg.homoscedasticity() applies Levene's test by default, letting users check whether the variance of the target variable is comparable across groups, which directly affects the reliability of standard errors and significance tests. When the assumption fails, options include robust standard errors or models that are less sensitive to unequal variances.

Sphericity, often overlooked but equally important, is the condition that the variances of the differences between all possible pairs of conditions are equal. It is the key assumption of repeated-measures ANOVA, and Pingouin's pg.sphericity() checks it with Mauchly's test by default. The idea also carries over to techniques such as principal component analysis (PCA), where a sphericity-type test (Bartlett's) is commonly used to judge whether the variables are correlated enough for the components to be meaningful. Running these checks alerts data practitioners to potential pitfalls before they move on to dimensionality reduction.

# Tackling Multicollinearity: A Critical Statistical Check

Last but certainly not least in an effective EDA approach is multicollinearity analysis. High correlation between predictor variables can destabilize coefficient estimates and weaken interpretability. Pingouin supports this check through its correlation utilities: rcorr() produces a correlation matrix annotated with statistical significance, and pg.pairwise_corr() lists every pair of variables with its coefficient and p-value. In practice, the strength of the correlation matters most; as a rule of thumb, pairs with an absolute correlation above 0.8 warrant caution and may steer model selection toward algorithms that handle collinear variables better.

# The Path Forward: Automating Your EDA Pipeline

The ability to quickly and effectively validate critical statistical properties of datasets transforms EDA from a routine necessity into a strategic advantage. By deploying Pingouin, data professionals can streamline their analytical workflows, automating critical steps in their EDA pipelines. This not only saves time but also decreases the likelihood of human error, ensuring that insights derived from the data are both accurate and actionable.

As organizations increasingly prioritize data-driven strategies, integrating rigorous EDA practices becomes essential. The insights from these statistical checks inform better modeling decisions and guard against reliance on flawed datasets. For practitioners in the data science field, tools like Pingouin are not just beneficial; they are a practical way to sustain the integrity and efficacy of machine learning applications.

About the Author

Iván Palomares Carrascosa specializes in AI, machine learning, and data science, empowering industry professionals with the tools they need to navigate complex data challenges.