Optimize Your LLM Pipeline: Efficient Alternatives to JSON

Revolutionizing Data Input with TOON: A Deep Dive into Token Efficiency

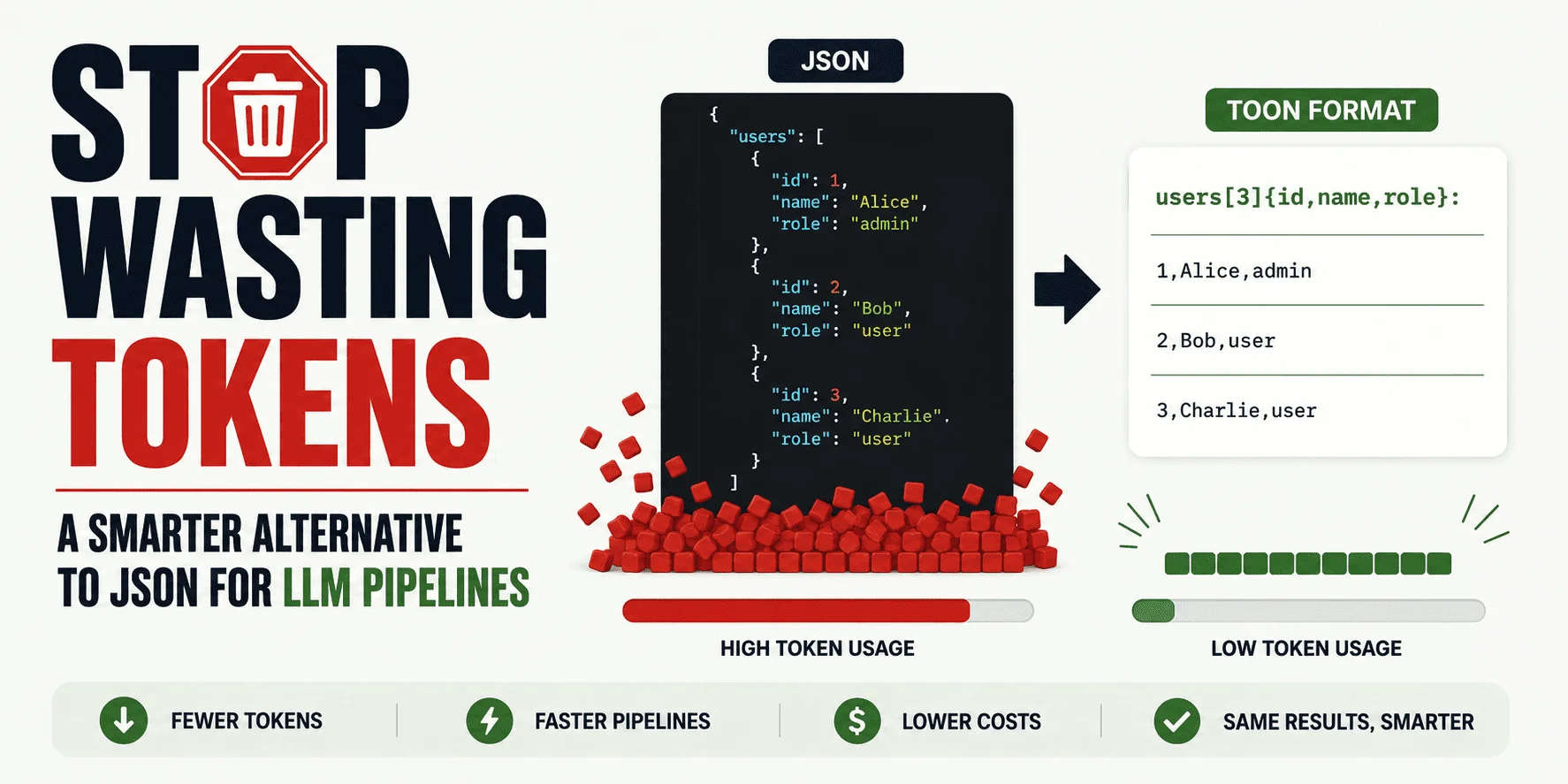

Token efficiency represents a pivotal frontier for machine learning practitioners, particularly when working with large language models (LLMs). The advent of Token-Oriented Object Notation (TOON) brings a compelling alternative to the traditional JSON format that many developers are accustomed to. While JSON has been the go-to format for APIs and data storage, it often introduces unnecessary overhead in LLM prompts—adding weight without delivering proportionate value. This transition is not merely incremental; it addresses fundamental issues of performance in LLM operations that could significantly impact user experience and cost.

The Costly Overhead of JSON in LLM Workflows

At its core, JSON requires repetitive declarations for fields, which not only bloats the size of data sent to LLMs but also dilutes the signal by interspersing structural elements throughout the data stream. This becomes glaringly apparent when submitting a series of similar data records, such as support tickets or user profiles. For instance, imagine processing 100 support tickets, each with the same field names such as "id," "name," and "role." The standard JSON format would repeat this structure across each entry, wasting precious tokens in the process.

TOON cuts through this inefficiency by allowing data to be presented in a more compact format. For example, where JSON structure would repeatedly declare field names, a TOON representation can declare them just once, then list the associated values in a row-like form. This can result in substantial reductions in token counts—something that becomes crucial when dealing with LLMs that charge per token. By minimizing token use while retaining clarity, TOON addresses a significant pain point in LLM input processing.

Understanding TOON: Structure and Applications

TOON retains the essential features of the JSON data model—objects, arrays, and fundamental types—while improving how these are serialized. Importantly, TOON claims to offer a lossless conversion back to JSON, ensuring no data is lost in the transition. You don’t need to overhaul your entire system; TOON is designed to be a conversion tool that integrates into existing workflows without replacing JSON's foundational role in backend operations.

Its primary use cases emerge in scenarios where uniform arrays of objects are consistently passed to LLMs. This includes support ticket reports, product catalog data, and any output that necessitates structured representation. However, it's crucial to approach TOON with discernment—its advantages decrease when dealing with irregular structures or smaller payloads. Thus, the decision to utilize TOON should be guided by the context of the specific tasks at hand.

A Practical Step-by-Step Guide to Implementing TOON

1. Install the TOON Command-Line Interface (CLI)

Getting started with TOON is straightforward, with the TOON CLI readily available for installation. Using npm, a popular package manager, you can install TOON for immediate testing:

npm install -g @toon-format/cli2. Converting JSON Files to TOON

To convert JSON to TOON, you'll first create a file containing your data, then run the conversion using the CLI commands. For a practical session, begin by making a JSON file:

mkdir toon-test

cd toon-test

nano users.jsonInsert your JSON data:

[

{ "id": 1, "name": "Alice", "role": "admin" },

{ "id": 2, "name": "Bob", "role": "user" },

{ "id": 3, "name": "Charlie", "role": "user" }

]Next, convert it to TOON format:

npx @toon-format/cli users.json -o users.toon3. Using TOON as Input to Models

Once the data is in TOON format, it can be easily integrated into your LLM prompts. To illustrate, consider a prompt designed to summarize user roles:

The following data is in TOON format.

users[3]{id,name,role}:

1,Alice,admin

2,Bob,user

3,Charlie,user

Summarize the user roles and point out anything unusual.4. Retaining JSON for Outputs

While TOON streamlines input, JSON remains the preferred format for output because of its established tooling ecosystem and schema enforcement capabilities. Thus, a recommended strategy involves:

- Utilizing JSON in your internal application

- Employing TOON for LLM input

- Converting model responses back to JSON for output

5. Benchmarking TOON's Performance

Adopting TOON should be backed by empirical evidence rather than anecdote. Run small benchmarks that examine token counts, latency, answer quality, and overall cost. This data-driven approach enables you to discern whether TOON genuinely enhances efficiency for specific steps in your LLM workflows rather than accepting blanket superiority claims.

Final Remarks on the TOON Format

TOON is an elegant solution targeting one glaring inefficiency in LLM processing: the excessive use of tokens through repetitive JSON structures. Its design simplicity opens avenues for more efficient data handling in contexts where structured, repeated records are frequent. However, it's vital to acknowledge that TOON isn't a universal remedy. The right tool depends largely on the complexity and nature of the data. Ultimately, employing TOON for repeated structured inputs, while holding on to JSON for outputs, offers a compelling strategy for optimizing LLM interactions. If you're grappling with token cost inefficiencies, it's well worth considering how TOON could transform your data input strategies.