This guide explores the mechanisms behind designing, scaling, and securing tool calls in AI agents. The focal point is ensuring that the interface bridging model reasoning and real-world execution is reliable enough for deployment.

We'll unpack various essential topics, including:

- Why the tool calling protocol matters, and how it separates model reasoning from deterministic execution.

- Writing tool definitions, handling errors, and designing parallelization strategies that stay reliable as your agent grows.

- Managing the scope of the tool catalog, safeguarding agent systems, and evaluating tool call effectiveness beyond just task completion.

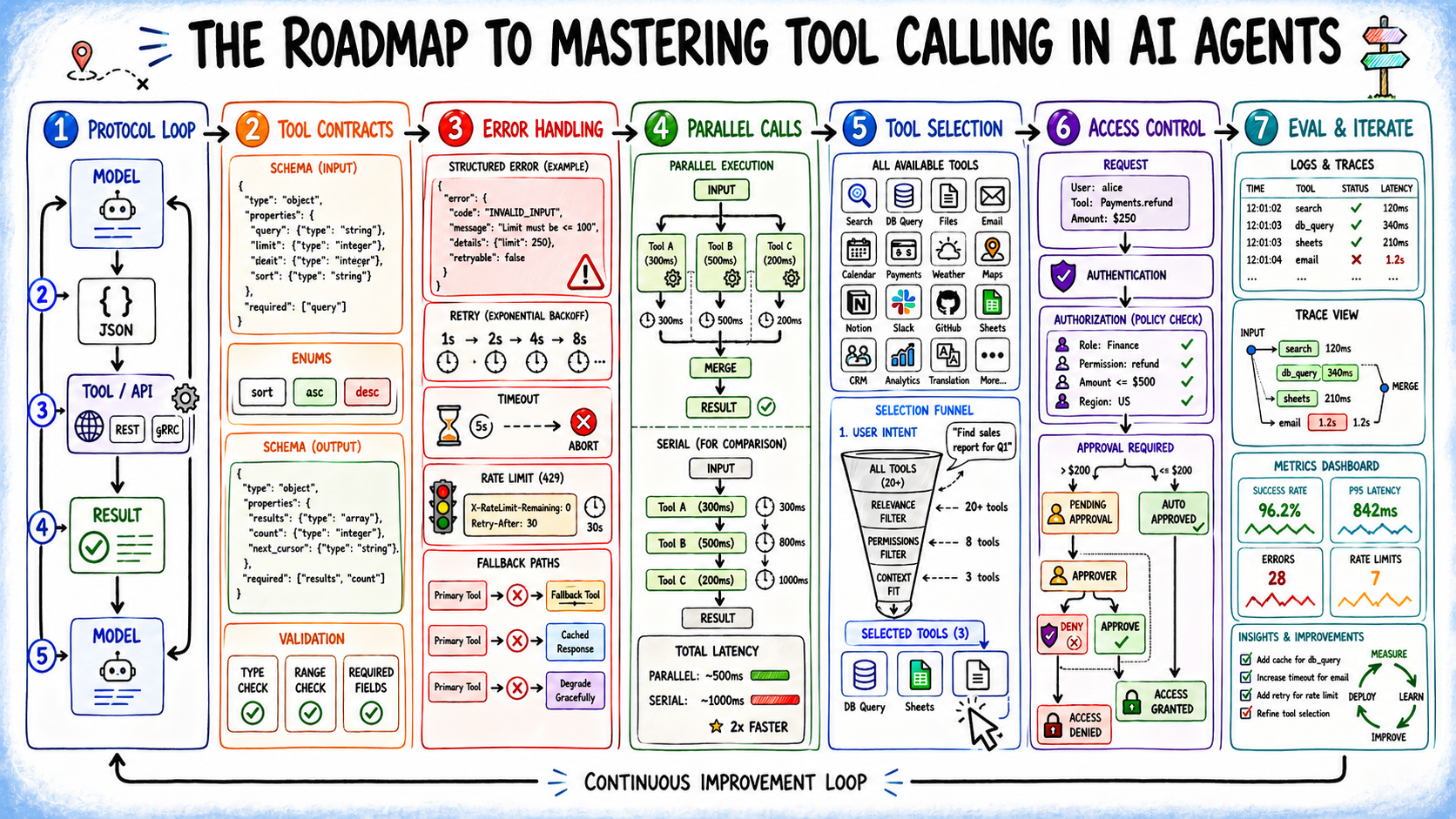

The Roadmap to Mastering Tool Calling in AI Agents

Why Mastering Tool Calling Matters

Many failures in AI agents stem from faulty tool calling rather than flawed reasoning. Often the agent understands the task yet calls the wrong tool, malforms the arguments, or hits an unexpected error that produces an incorrect output. Reasoning gets the spotlight, but in practice many issues arise in the tool layer.

Tool calling, also referred to as function calling, is what turns a language model's reasoning into action. Without it, agents are restricted to their training data and cannot run live queries or interact with external systems. With effective tool calling, an agent can search the web, call APIs, run code, and execute transactions across any system that exposes an interface.

Getting this right means understanding the entire architecture, not just the happy path. The following sections cover:

- The tool calling protocol and why it matters for the execution boundary

- How to write tool definitions and error handling that hold up in real-world conditions

- Techniques for scaling tool catalogs and running calls in parallel without compromising accuracy

- Securing agentic systems and evaluating tool performance beyond simple success metrics

Each section covers when a practice applies, the trade-offs involved, and what goes wrong when it is overlooked.

Grasping the Tool Calling Protocol

At its core, tool calling in AI agents is a simple loop: the model decides which action is needed, and the system carries it out.

First, you define the tools: a list in which each tool has a distinct name, a stated purpose, and structured schemas for its input and output. These definitions set the boundary of what the agent can do.

When a user request arrives, the model evaluates it and decides whether it can answer directly or needs a tool. If a tool is needed, the model selects the best fit and generates a structured JSON payload containing the tool's name and its parameters.

- On receiving the tool call, the system validates the input

- It executes the specified function or API

- It handles any errors and formats the result

The result is passed back to the model, which continues its reasoning and produces a final answer. Note that the model itself never executes anything: your application code receives the payload, validates it, runs the logic, and returns the outcome as context.

This separation of roles is vital. The model is a non-deterministic reasoner that proposes actions, while your application code is the deterministic layer that executes them. Letting the model guess at argument formats, failing to feed results back to it, or skipping validation blurs this boundary and leads to silent failures at scale.
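The execution side of the loop described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `get_weather` tool, the `TOOLS` registry, and the `{"ok": ..., "result"/"error": ...}` result shape are all hypothetical, and the payload format (`name` plus `arguments`) is an assumption that varies by model provider.

```python
import json
from typing import Any, Callable


def get_weather(city: str) -> dict[str, Any]:
    # Hypothetical tool: stands in for a real API call and returns canned data.
    return {"city": city, "temp_c": 21}


# Hypothetical registry: tool name -> (exact set of argument names, handler).
TOOLS: dict[str, tuple[set[str], Callable[..., Any]]] = {
    "get_weather": ({"city"}, get_weather),
}


def execute_tool_call(payload: str) -> dict[str, Any]:
    """Validate a model-proposed call, run it, and return a result for the model."""
    # 1. Parse: the model only proposes text, so it may not even be valid JSON.
    try:
        call = json.loads(payload)
    except json.JSONDecodeError as exc:
        return {"ok": False, "error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"ok": False, "error": "payload must be a JSON object"}

    # 2. Validate the tool name and argument names before executing anything.
    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name!r}"}
    expected, handler = TOOLS[name]
    if not isinstance(args, dict) or set(args) != expected:
        return {"ok": False, "error": f"expected arguments {sorted(expected)}"}

    # 3. Execute deterministically and report failures instead of raising.
    try:
        return {"ok": True, "result": handler(**args)}
    except Exception as exc:
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}


print(execute_tool_call('{"name": "get_weather", "arguments": {"city": "Oslo"}}'))
print(execute_tool_call('{"name": "get_weather", "arguments": {"town": "Oslo"}}'))
```

Every path returns a structured result, so the model always gets feedback it can reason about, including when its own proposal was malformed.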

Crafting Effective Tool Definitions

Well-written tool definitions are a key factor in whether your agent uses tools accurately. Ambiguous descriptions lead to wrong tool selections, and loosely specified parameters lead to malformed arguments.

Effective definitions require three crucial components:

- A clear purpose statement with specific boundaries: “Retrieve current or time-sensitive information from the web; avoid using this for queries resolvable through training data” is far better than a generic “Search the web.”

- Defined and constrained parameters: favor enumerated types over open strings, use descriptive parameter names the model can infer from context, and provide explicit examples of expected formats when necessary.

- A detailed output contract: specify what the tool returns and in what format, and explain how partial or empty results should be interpreted, so the model bases its decisions on actual signals rather than guesses.
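The three components above might look like this for a web search tool. The sketch uses a JSON Schema style `parameters` object, which many function-calling APIs accept, but the surrounding envelope differs by provider. The `recency` parameter, the `returns` field, and the `check_arguments` helper are hypothetical names introduced for illustration, and the helper checks only a small subset of JSON Schema.

```python
from typing import Any

WEB_SEARCH_TOOL: dict[str, Any] = {
    "name": "web_search",
    # Purpose statement with an explicit boundary.
    "description": (
        "Retrieve current or time-sensitive information from the web. "
        "Do not use this for queries resolvable through training data."
    ),
    # Constrained parameters: an enum instead of an open string where possible.
    "parameters": {
        "type": "object",
        "properties": {
            "query": {
                "type": "string",
                "description": "Search terms, e.g. 'python release notes'.",
            },
            "recency": {
                "type": "string",
                "enum": ["day", "week", "month", "any"],
                "description": "How recent the results must be.",
            },
        },
        "required": ["query", "recency"],
    },
    # Output contract (hypothetical field): format plus meaning of empty results.
    "returns": (
        "A JSON list of {title, url, snippet} objects. An empty list means "
        "the search succeeded but found nothing; it is not an error."
    ),
}


def check_arguments(tool: dict[str, Any], args: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the arguments pass."""
    schema = tool["parameters"]
    props = schema["properties"]
    problems = [
        f"missing required parameter: {key}"
        for key in schema["required"]
        if key not in args
    ]
    for key, value in args.items():
        if key not in props:
            problems.append(f"unexpected parameter: {key}")
            continue
        allowed = props[key].get("enum")
        if allowed is not None and value not in allowed:
            problems.append(f"{key} must be one of {allowed}")
    return problems


print(check_arguments(WEB_SEARCH_TOOL, {"query": "x", "recency": "yesterday"}))
```

Returning the list of problems to the model, rather than silently coercing bad values, gives it a concrete signal to correct the call on the next turn.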

When tools overlap in function, establish clear decision boundaries. If you have both knowledge_base_search and web_search, each definition must state when to use that tool and when to use the other one instead. Negative guidance, which tells the model when not to invoke a tool, also helps prevent unnecessary calls that add latency and consume resources.
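One way to keep such boundaries consistent is to build every description from the same three parts. The sketch below is illustrative only: `build_description` is a hypothetical helper, and the stated scope of each tool (internal documentation versus public web content) is an assumption, since the actual split depends on your system.

```python
def build_description(purpose: str, use_when: str, avoid_when: str) -> str:
    """Combine purpose, positive guidance, and negative guidance into one string."""
    return f"{purpose} Use when: {use_when} Do not use when: {avoid_when}"


# Assumed scopes for illustration; each description names the other tool
# so the model sees the decision boundary from both sides.
TOOL_DESCRIPTIONS: dict[str, str] = {
    "knowledge_base_search": build_description(
        purpose="Search internal documentation.",
        use_when="the answer should come from content your organization owns.",
        avoid_when="the question is about public or recent events; use web_search.",
    ),
    "web_search": build_description(
        purpose="Retrieve current or time-sensitive information from the web.",
        use_when="the answer depends on public, recent information.",
        avoid_when=(
            "the question is about internal documentation; "
            "use knowledge_base_search."
        ),
    ),
}

for name, description in TOOL_DESCRIPTIONS.items():
    print(f"{name}: {description}")
```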

Conclusion

The tool calling layer is where agent systems act on the real world. Doing it well means defining robust contracts for tool use, handling failures proactively, limiting the agent to the capabilities it needs, and continuously evaluating the metrics that actually matter. As you carry these practices into production, measure their effect rather than assuming it; that is the path to reliable AI agents.

To recap, here’s what we’ve explored: